It’s the last day of 2025 and, despite everything that happened work-wise for me this year, I am coming out of 2025 very grateful. I have taken some time to write down a few reflections from the year.

The number of available roles for Agile practitioners such as Scrum Masters and Agile Coaches has been dwindling. Yet there is still a lot of work to be done, as long as there are teams that struggle to ship software consistently and many more teams operating as feature factories with little clue about how much value they are delivering.

Each day presents something new to learn.

These blog posts are my opinions, drawn from reflections on topics relating to my current areas of interest: Enterprise Agility, Leadership, Entrepreneurship, Personal Development and the Complexities of Africa.

Barry Overeem, in his paper on the 8 Stances of a Scrum Master, describes the Scrum Master as a Coach, Teacher, Mentor, Facilitator, Change Agent, Impediment Remover, Manager, and Servant Leader.

While all eight stances are valuable, three of them form the bedrock of an effective Scrum Master: Coach, Teacher, and Mentor. These roles are often blended, but each serves a distinct purpose in helping teams grow and thrive.

The Scrum Master as a Coach

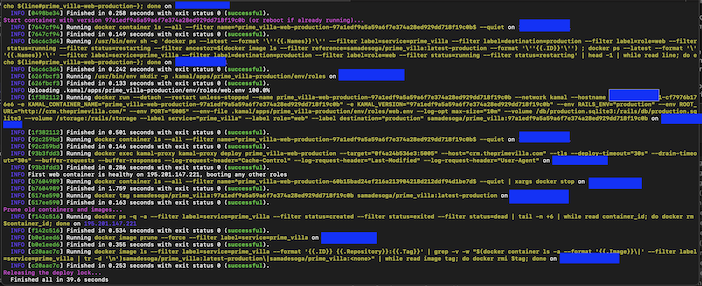

It’s been over 10 years since I was last paid to write code professionally; however, I have remained technical, and one of the many ways I try to keep myself technically competent is by building web applications to manage tasks that might well be suited to Excel. I prefer bespoke software over off-the-shelf as it’s cheaper for me and I get to keep my knowledge of Ruby on Rails (my favourite web application framework) up to date.

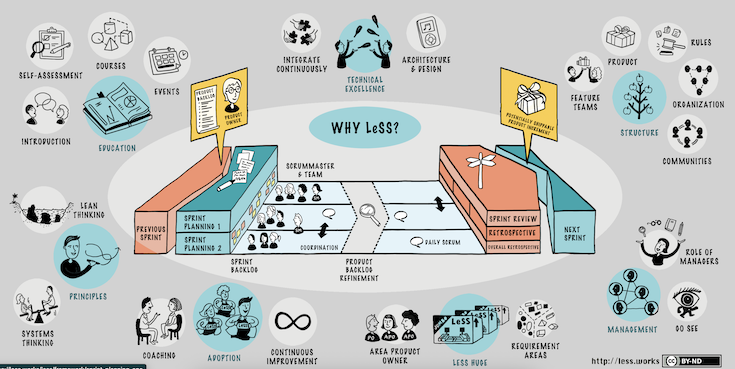

I spent the better part of 3 days last week in a Certified LeSS Practitioner course with the awesome Bas Vodde, one of the creators of the LeSS framework. I had so many aha moments, and this is my attempt to document some of my key learning moments from the 3 days. These are my notes, and there is a lot more context that might help you as a reader.

“Scrum would never work for us here.”

It’s a phrase I’ve heard countless times, and it often reflects a deeper resistance to change or a misunderstanding of what Scrum truly is. While it’s true that Scrum isn’t a one-size-fits-all solution, it’s an incredibly powerful framework for teams focused on product delivery. When implemented correctly, Scrum can transform how teams work, prioritise, and deliver value.

So, why do so many teams struggle with Scrum?

Product teams are under increasing pressure to deliver value that delights their customers and to respond proactively to changing market conditions. However, this will only be achieved when teams work effectively together toward common goals within a culture of trust and collaboration. While I wouldn’t recommend the Scrum Framework for all teams, I believe that the Scrum Values lay a solid foundation for building the required culture of trust and collaboration.

Attendees at our Scrum Training often ask me for my preferred format for a Daily Scrum. I don’t know that I have a preferred format, but it is definitely not “What I did yesterday, what I plan to do today and discussion about Blockers”. However, I would ask you to reflect on the purpose of the Daily Scrum as stated in the Scrum Guide:

… to inspect progress toward the Sprint Goal and adapt the Sprint Backlog as necessary, adjusting the upcoming planned work.

Most teams that regularly run a Sprint Retrospective use the dreaded quadrant: What Worked Well, What Didn’t Work Well, Ideas and Actions (and variants of this quadrant). I am sure your team has used this format many times. I coach Scrum Masters and their teams to always prepare for the Sprint Retrospective, and part of that preparation is observing the team all through the Sprint and experimenting with different formats for running the Sprint Retrospective.

I was invited to a meeting titled “Let’s hang and code”; it was scheduled for 2 hours and, out of curiosity, I attended.

This meeting was the idea of the Tech Lead of a startup that I am currently working with. This is a startup where the entire team works remotely, and the purpose, I learnt, was to foster relationships between the team members and support people who might need help with the tasks they have picked up for the current sprint.

You assign tasks to subordinates and chase them for updates as soon as you feel the tasks should have been done. Regardless of what your subordinates are currently doing, you believe they should be working on something else. You have just assigned a task to a subordinate and you immediately follow up with instructions on how they should be doing it. You are so bothered about how they spend their time, and you just need to ensure that they have enough work for 40 hours a week; that’s what their contracts say anyway.